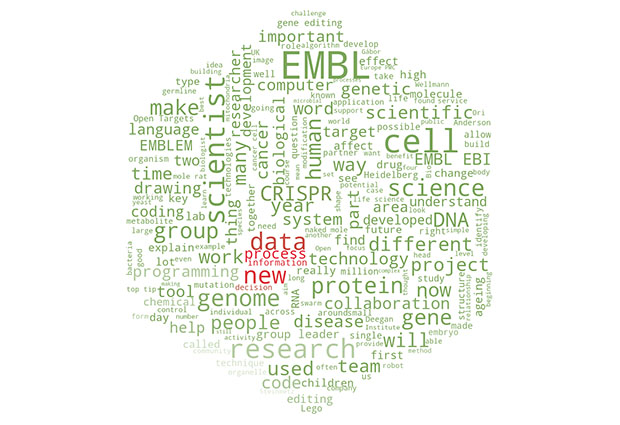

Programming: language

How computer processing of human language is harnessed by EMBL scientists

“We have 35 million records; that’s about seven times the size of English Wikipedia,” says Maria Levchenko, community manager at Europe PMC. “We’re in a very good position to utilise text mining.”

Hosted by EMBL’s European Bioinformatics Institute (EMBL-EBI), Europe PMC is a database of life science literature that aims to provide free, worldwide access to scientific research. To handle its vast collection of text, Europe PMC is one of a growing number of organisations capitalising on the technological gold rush of text mining: using computer software to comb through existing text and extract new knowledge.

One of Europe PMC’s main goals is to use text mining to accelerate scientific discovery. “You could be researching genes, proteins or organisms, and with our tool SciLite [scientific highlighter] you can see them at a glance,” says Levchenko. “We also link publications to data so it’s easy to go from one to the other.” These tools help scientists generate new insights from existing research, without spending the many lifetimes it would take to read all of the relevant scientific publications themselves.

Europe PMC is also a key contributor to Open Targets – a collaboration between industrial and academic institutions uncovering new links between genes and diseases. By combining genetic theory and text mining, Open Targets hopes to identify genes that could be potential targets for new disease treatments. “It’s a public–private partnership,” says Levchenko. “It’s companies working together to make discoveries happen, and the lion’s share of its new gene–disease associations comes from text mining here at Europe PMC.”

Reading between the lines

However, handling scientific publications presents a unique set of challenges. “Biology literature can be very messy from a text mining standpoint,” says Xiao Yang, Europe PMC’s text mining specialist. “There are lots of abbreviations, acronyms and big, ambiguous words.” Context is also important. “The same gene in Drosophila and humans has the same name,” says Levchenko, “but you often need to distinguish between these two very different things.”

There is also more to finding new gene–disease associations at Open Targets than just searching publications for mentions of genes and diseases. For example, when analysing the sentence “Gene A does not cause disease B”, the computer must be able to tell that the relationship is negative. To overcome these challenges, Europe PMC’s computers need a deeper understanding of how we communicate.
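A deliberately simple way to catch the negation in that example is a cue-word rule in the spirit of rule-based tools such as NegEx: flag a gene–disease co-mention as negative when a negation word sits between the two names. This sketch is illustrative only and is not how Europe PMC works; the cue list and the function name `relation_polarity` are hypothetical, and real pipelines must also handle negation scope, hedging and far more varied phrasing.

```python
import re

# Hypothetical, deliberately short list of negation cues.
NEGATION_CUES = ("not", "no", "never", "cannot", "without", "fails to")


def relation_polarity(sentence, gene, disease):
    """Classify a gene-disease co-mention as 'positive', 'negative' or 'none'.

    'negative' means a negation cue appears between the two mentions.
    """
    text = sentence.lower()
    g, d = text.find(gene.lower()), text.find(disease.lower())
    if g == -1 or d == -1:
        return "none"
    # Order the two mentions and look only at the words between them.
    (start, first), (end, _) = sorted([(g, gene), (d, disease)])
    between = text[start + len(first):end]
    for cue in NEGATION_CUES:
        if re.search(r"\b" + re.escape(cue) + r"\b", between):
            return "negative"
    return "positive"


print(relation_polarity("Gene A does not cause disease B", "Gene A", "disease B"))  # negative
print(relation_polarity("Gene A causes disease B", "Gene A", "disease B"))  # positive
```

Rules like this break easily: “Gene A, not gene C, causes disease B” would be wrongly marked negative, which is one reason text-mining groups turn to machine learning.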


“Human language is ambiguous, fuzzy and imprecise,” says Katja Ovchinnikova, natural language processing (NLP) expert at EMBL Heidelberg. NLP is the area of computer science that translates the meaning behind our natural language – how we typically speak to one another – into a form that computers can understand and use. It often involves machine learning algorithms that give a computer an ‘intuition’ for language, like a child learning their mother tongue. “You don’t say to children, ‘These words mean the same thing, these words mean opposite things,’” says Ovchinnikova. “They just listen to speech and pick it up.” Like children, the computers used by modern biologists learn to understand our language through exposure, in their case by processing vast amounts of text.

Fluent microbe

The cornerstone of NLP is identifying how different words are related. One way to do this is by studying word context: which other words does a word often appear close to? A run of n consecutive words is called an n-gram, and some n-grams occur together often enough to tell you more about a text than their individual words do separately. For example, ‘New York City’, a 3-gram, tells you more about the locations of apartments in a database than its individual words.
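Counting n-grams is straightforward to sketch. The snippet below, a minimal illustration using made-up apartment listings, collects every run of three consecutive words in each text and keeps the runs that recur. The helper names and the `min_count` cut-off are illustrative choices, not part of any particular NLP library.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return every run of n consecutive tokens as a tuple."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def frequent_ngrams(texts, n, min_count=2):
    """Count n-grams across texts and keep those seen at least min_count times."""
    counts = Counter()
    for text in texts:
        counts.update(ngrams(text.lower().split(), n))
    return {gram: count for gram, count in counts.items() if count >= min_count}


listings = [  # hypothetical apartment listings
    "Studio apartment in New York City",
    "Loft near New York City centre",
    "Flat in York",
]
print(frequent_ngrams(listings, 3))  # {('new', 'york', 'city'): 2}
```

The word ‘york’ on its own appears in all three listings, but only the 3-gram singles out the two that are in New York City.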

n-grams can even be applied beyond conventional words. The Iqbal group at EMBL-EBI use n-grams – known as k-mers in computational biology – in their search engine Bitsliced Genomic Signature Index (BIGSI). BIGSI substitutes microbial DNA for human language: nucleotides play the role of letters, and k-mers are short, overlapping strings of k nucleotides. Breaking a gene for antibiotic resistance down into its k-mers and searching for them with BIGSI quickly shows you all of the datasets and species in which this gene has been reported before.
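The k-mer idea can be sketched in a few lines: a DNA sequence is split into every overlapping substring of length k, and two sequences can be compared by the share of k-mers they have in common. This is a toy illustration assuming a tiny k for readability (tools such as BIGSI work with much longer k-mers) and ignoring the reverse-complement strand, which a real index would have to handle; `kmers` and `shared_kmer_fraction` are hypothetical names.

```python
def kmers(sequence: str, k: int) -> list[str]:
    """Every overlapping substring of length k, in order (reverse strand ignored)."""
    sequence = sequence.upper()
    return [sequence[i:i + k] for i in range(len(sequence) - k + 1)]


def shared_kmer_fraction(query: str, target: str, k: int) -> float:
    """Fraction of the query's distinct k-mers that also occur in target."""
    query_set = set(kmers(query, k))
    if not query_set:
        return 0.0
    return len(query_set & set(kmers(target, k))) / len(query_set)


print(kmers("ACGTA", 3))  # ['ACG', 'CGT', 'GTA']
print(shared_kmer_fraction("ACGTA", "TTACGTT", 3))
```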

The big challenge when switching from human to genetic language is that new microbial genomes often contain new ‘words’, k-mers that have never been seen before. Unlike many NLP technologies built for human language, BIGSI was therefore developed with an emphasis on scaling to rapidly expanding vocabularies.
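Part of how BIGSI scales is hinted at in its name. As a rough sketch, assuming the general design of one Bloom filter per dataset stored ‘bitsliced’ so that each row holds one bit position across all datasets, a new dataset is simply appended as a new column, and a k-mer query only needs to combine a handful of rows with a bitwise AND. Everything concrete here (the class name `BitslicedIndex`, SHA-256 hashing, the filter size, the number of hash functions and the `threshold` parameter) is an assumption for illustration, not BIGSI’s actual code.

```python
import hashlib


def kmers(sequence: str, k: int) -> set[str]:
    """Distinct overlapping substrings of length k (reverse strand ignored)."""
    sequence = sequence.upper()
    return {sequence[i:i + k] for i in range(len(sequence) - k + 1)}


class BitslicedIndex:
    """Toy bitsliced Bloom-filter index: one filter (column) per dataset.

    Row i stores bit i of every dataset's filter as an int bitmask.
    """

    def __init__(self, m: int = 1024, num_hashes: int = 3):
        self.m = m                    # bits per Bloom filter (assumed size)
        self.num_hashes = num_hashes  # hash functions per k-mer (assumed)
        self.rows = [0] * m           # row i: bitmask over datasets
        self.names: list[str] = []    # column j -> dataset name

    def _positions(self, kmer: str):
        """Derive num_hashes bit positions from seeded SHA-256 digests."""
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{kmer}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.m

    def add_dataset(self, name: str, kmer_set) -> None:
        """Append a new column; existing columns are never rewritten."""
        col = len(self.names)
        self.names.append(name)
        for kmer in kmer_set:
            for pos in self._positions(kmer):
                self.rows[pos] |= 1 << col

    def query_kmer(self, kmer: str) -> int:
        """Bitmask of datasets that may contain kmer (false positives possible)."""
        mask = (1 << len(self.names)) - 1
        for pos in self._positions(kmer):
            mask &= self.rows[pos]
        return mask

    def query(self, kmer_set, threshold: float = 1.0) -> list[str]:
        """Datasets containing at least `threshold` of the query's k-mers."""
        query_kmers = set(kmer_set)
        if not query_kmers:
            return []
        hits = [0] * len(self.names)
        for kmer in query_kmers:
            mask = self.query_kmer(kmer)
            for col in range(len(self.names)):
                if (mask >> col) & 1:
                    hits[col] += 1
        return [name for name, h in zip(self.names, hits)
                if h / len(query_kmers) >= threshold]


index = BitslicedIndex()
index.add_dataset("sample_1", kmers("ACGTACGTTT", 3))
index.add_dataset("sample_2", kmers("GGGGCCCCAA", 3))
print(index.query(kmers("ACGTAC", 3)))
```

Because a Bloom filter never misses a k-mer that was added but can occasionally report one that was not, a query may return false positives but no false negatives; real deployments size the filters to keep that rate low.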

Beyond language

But why stop at words and letters? “Any sort of input can be handled with the same computer science,” says Ovchinnikova. She and the Alexandrov team at EMBL Heidelberg are using NLP algorithms to extract knowledge from a large-scale community knowledge base for spatial metabolomics called METASPACE.

METASPACE contains spatial maps of the metabolites in many types of tissue. Metabolites are small molecules that are used by cells to steer their internal processes such as energy production, anti-tumour activity or intracellular communication. Hundreds of scientists from around the world use METASPACE to share the metabolomics data they produce using MALDI (matrix-assisted laser desorption/ionisation) imaging mass spectrometry, or MALDI-IMS.

MALDI-IMS data represent a tissue as a 2D grid of pixels, each about the size of a typical cell. A laser is used to release molecules from the area of tissue corresponding to each pixel. These molecules are then drawn into a mass spectrometer and analysed. The resulting mass spectra reveal which molecules were present in each pixel.
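One common way to work with such data is to pull out an ‘ion image’: for a chosen mass-to-charge ratio (m/z), record at every pixel the intensity of the peaks that fall within a small tolerance. The sketch below assumes a hypothetical in-memory layout, a dictionary from (x, y) pixel coordinates to lists of (m/z, intensity) peaks, with the tolerance given in parts per million; real MALDI-IMS data usually arrive in formats such as imzML and need dedicated readers.

```python
def ion_image(spectra, width, height, target_mz, ppm=5.0):
    """Return a height x width grid of summed intensities near target_mz.

    spectra maps (x, y) pixel coordinates to lists of (mz, intensity) peaks;
    a peak counts if it lies within `ppm` parts per million of target_mz.
    """
    tolerance = target_mz * ppm / 1e6
    image = [[0.0] * width for _ in range(height)]
    for (x, y), peaks in spectra.items():
        image[y][x] = sum((intensity for mz, intensity in peaks
                           if abs(mz - target_mz) <= tolerance), 0.0)
    return image


# Hypothetical 2 x 2 tissue section with three measured pixels.
spectra = {
    (0, 0): [(500.000, 10.0), (600.000, 3.0)],
    (1, 0): [(500.001, 4.0)],
    (0, 1): [(600.000, 7.0)],
}
print(ion_image(spectra, width=2, height=2, target_mz=500.0))  # [[10.0, 4.0], [0.0, 0.0]]
```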

Making sense of MALDI-IMS data can be a challenge. However, the team has found that the NLP algorithm Word2Vec, originally developed at Google to measure the relationships between words, is surprisingly well suited for mining the terabytes of data in METASPACE. Analysing spatial metabolomics data, it turns out, has a key similarity with analysing text: both aim to find patterns among a large number of related objects (words in one case, metabolites in the other) whose positions relative to one another carry meaning.

Cell.txt

Word2Vec uses a sliding ‘window’ that moves across a body of text and records which words are often found close together. “If two words occur in the same window, they are said to occur in the same context,” says group leader Theodore Alexandrov. “If words often occur in the same context, they are related.”
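The windowing step described here can be sketched directly. The function below walks along a sentence and pairs each word with every word up to `window` positions away, which is how Word2Vec’s skip-gram variant builds its training examples; the neural-network training that turns these pairs into word vectors is omitted, and libraries such as gensim provide complete implementations. Function and variable names are illustrative.

```python
from collections import Counter


def context_pairs(tokens, window=2):
    """Return (target, context) pairs for every word within `window` positions."""
    pairs = []
    for i, target in enumerate(tokens):
        start, stop = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(start, stop):
            if j != i:
                pairs.append((target, tokens[j]))
    return pairs


sentence = "the kinase phosphorylates the receptor".split()
cooccurrence = Counter(context_pairs(sentence, window=1))
print(cooccurrence[("kinase", "phosphorylates")])  # 1
```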

The algorithm has proved adaptable to a wide range of research topics. “We’re modelling a cell as a text document and a metabolite as a word in this document. We want to find functionally related metabolites, so we’re applying Word2Vec to the 2D spatial context of metabolites seen in the MALDI-IMS data.”
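Moving from a line of text to a 2D image mostly means replacing the one-dimensional window with a square neighbourhood of pixels. The sketch below is one hypothetical way to do that, not the team’s published method: each pixel holds the set of metabolites detected there, and two metabolites ‘share a context’ whenever they appear in the same pixel or in pixels at most `radius` steps apart. It only counts co-occurrences; Word2Vec would go on to learn vectors from such pairs. The metabolite names are placeholders.

```python
from collections import Counter
from itertools import product


def spatial_cooccurrence(grid, radius=1):
    """Count ordered metabolite pairs within a (2*radius+1) x (2*radius+1) window.

    grid[y][x] is the set of metabolites detected at pixel (x, y).
    """
    height, width = len(grid), len(grid[0])
    counts = Counter()
    for y, x in product(range(height), range(width)):
        for dy, dx in product(range(-radius, radius + 1), repeat=2):
            ny, nx = y + dy, x + dx
            if 0 <= ny < height and 0 <= nx < width:
                for a in grid[y][x]:
                    for b in grid[ny][nx]:
                        if a != b:  # skip a metabolite paired with itself
                            counts[(a, b)] += 1
    return counts


# Hypothetical 1 x 3 strip of pixels.
strip = [[{"glucose"}, {"glucose", "lactate"}, {"citrate"}]]
counts = spatial_cooccurrence(strip, radius=1)
print(counts[("glucose", "lactate")])  # 2
```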

Metabolites that are identified together when a cell performs a particular task could be part of, or related to, the same reaction or metabolic pathway. Cancer cells, for example, have their metabolisms reprogrammed and accumulate particular metabolites, sometimes called ‘oncometabolites’, that are remarkably different from those found in healthy cells. The team aims to use the data from METASPACE to build a Word2Vec-powered network of metabolite relationships. By revealing which metabolites are related to known oncometabolites, this network could help scientists identify new ones not yet associated with cancer.
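Once each metabolite has a vector, relatedness is typically scored with cosine similarity, as is standard for Word2Vec embeddings, and a network can be drawn by linking pairs above a cut-off. The sketch below uses made-up two-dimensional vectors, hypothetical names (`known_oncometabolite`, `candidate_x`, `unrelated_y`) and an arbitrary 0.8 threshold; real embeddings have far more dimensions, and the threshold would need to be chosen with care.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def similarity_network(vectors, threshold=0.8):
    """Return (a, b, score) edges for every pair with cosine similarity >= threshold."""
    names = sorted(vectors)
    edges = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            score = cosine(vectors[a], vectors[b])
            if score >= threshold:
                edges.append((a, b, round(score, 3)))
    return edges


def nearest(vectors, query, top_n=3):
    """Rank other metabolites by similarity to `query`, most similar first."""
    scores = [(name, cosine(vectors[query], vec))
              for name, vec in vectors.items() if name != query]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:top_n]


embeddings = {  # hypothetical learned vectors
    "known_oncometabolite": [1.0, 0.0],
    "candidate_x": [0.9, 0.1],
    "unrelated_y": [0.0, 1.0],
}
print(similarity_network(embeddings))  # [('candidate_x', 'known_oncometabolite', 0.994)]
print(nearest(embeddings, "known_oncometabolite", top_n=1))
```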

Research like this has great potential for other applications in the clinic. But the current focus for Alexandrov and Ovchinnikova is to explore how combining NLP algorithms with biological techniques can help answer fundamental research questions. Indeed, these algorithms – and the computing power that drives them – are helping researchers throughout EMBL to take the science designed to help computers make sense of language and use it to deepen our understanding of biology.